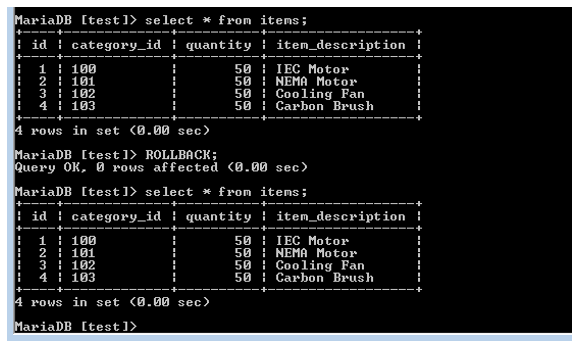

I'm running Duplicati under Manjaro ARM on a Raspberry Pi 4 with 8GB of RAM. I used the AUR package here: AUR (en) - duplicati-latest. The backup fails with "SQLite error cannot commit - no transaction is active", and I have no idea how to solve it.

I've tried moving the database to a larger disk, and I've tried setting the tmpdir in the web GUI to the same larger disk. The latest log I have with the error is posted below. Does anyone know what could be wrong and any possible solutions?

    7:18 PM: Failed while executing "Backup" with id: 1
    (0x80004005): SQLite error
      at 3.Reset ( stmt) in :0
      at 3.Step ( stmt) in :0
      at .NextResult () in :0
      at …ctor ( cmd, behave) in :0
      at (wrapper remoting-invoke-with-check) …ctor(,)
      at .ExecuteReader ( behavior) in :0
      at .ExecuteNonQuery () in :0
      at .Commit () in :0
      at . (System.Boolean isDisposing) in :0
      at . () in :0
      at .BackupHandler.RunAsync (System.String sources, filter, token) in :0
      at ( task) in :0
      at .BackupHandler.Run (System.String sources, filter, token) in :0
      at +c_DisplayClass14_0.b_0 ( result) in :0
      at .RunAction (T result, System.String& paths, & filter, System.Action`1 method) in :0
      at .Backup (System.String inputsources, filter) in :0
      at (+IRunnerData data, System.Boolean fromQueue) in :0

Whether you call conn.commit() once at the end of the procedure or after every single database change depends on several factors.

This is what everybody thinks of at first sight: when a change to the database is committed, it becomes visible to other connections. Until it is committed, it remains visible only locally, to the connection through which the change was made. Because of the limited concurrency features of SQLite, the database can only be read while a transaction is open.

You can investigate what happens by running the following script and examining its output:

    import os
    ...
        "id" INTEGER PRIMARY KEY AUTOINCREMENT, -- rowid
    ...
    # functions are syntactic sugar only and use global conn, cur, rowid
    ...
    # simulate another script accessing the database concurrently
    rows = conn2.cursor().execute(sql).fetchall()
    ...
    cur.execute('update "mytable" set data = data + 1 where "id" = ?', (rowid,))
    ...
    sql = 'insert into "mytable"(data) values(17)'

So it depends on whether you can live with the situation that a concurrent reader, be it in the same script or in another program, will be off by two at times.

When a large number of changes is to be done, two other aspects enter the scene:

Performance

The performance of database changes depends dramatically on how you do them. It is already noted as a FAQ: "Actually, SQLite will easily do 50,000 or more INSERT statements per second on an average desktop computer. But it will only do a few dozen transactions per second." It is absolutely helpful to understand the details here, so do not hesitate to follow the link and dive in. It's written in C, but the results would be similar if one did the same in Python.

Note: While both resources refer to INSERT, the situation will be very much the same for UPDATE, for the same reasons.

Exclusively locking the database

As already mentioned above, an open (uncommitted) transaction will block changes from concurrent connections. So it makes sense to bundle many changes to the database into a single transaction by executing them and then jointly committing the whole bunch of them. Unfortunately, computing the changes may sometimes take a while, and when concurrent access is an issue you will not want to lock your database for that long. Because it can become rather tricky to collect pending UPDATE and INSERT statements somehow, this will usually leave you with a tradeoff between performance and exclusive locking.
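To get a feeling for the cost of committing after every single change compared with committing once per batch, a rough timing sketch might look like the one below. It is not taken from the original answer: the helper names `insert_rows` and `count_rows`, the file names, and the row count are assumptions chosen for illustration, and the measured times will vary a lot with the disk and the filesystem.

```python
import os
import sqlite3
import tempfile
import time


def insert_rows(db_path, n_rows, commit_every):
    """Insert n_rows rows, committing after every `commit_every` inserts.

    Returns the elapsed wall-clock time in seconds (hypothetical helper).
    """
    conn = sqlite3.connect(db_path)
    try:
        conn.execute(
            'CREATE TABLE IF NOT EXISTS "mytable" '
            '("id" INTEGER PRIMARY KEY AUTOINCREMENT, "data" INTEGER)'
        )
        conn.commit()
        start = time.perf_counter()
        for i in range(n_rows):
            # The sqlite3 module opens a transaction implicitly before INSERT.
            conn.execute('INSERT INTO "mytable"(data) VALUES (?)', (i,))
            if (i + 1) % commit_every == 0:
                conn.commit()
        conn.commit()  # commit whatever is left of the last batch
        return time.perf_counter() - start
    finally:
        conn.close()


def count_rows(db_path):
    """Return the number of rows currently stored in "mytable"."""
    conn = sqlite3.connect(db_path)
    try:
        return conn.execute('SELECT COUNT(*) FROM "mytable"').fetchone()[0]
    finally:
        conn.close()


if __name__ == "__main__":
    n = 200  # kept small on purpose; per-row commits are slow on real disks
    with tempfile.TemporaryDirectory() as tmp:
        per_row = insert_rows(os.path.join(tmp, "per_row.sqlite"), n, 1)
        batched = insert_rows(os.path.join(tmp, "batched.sqlite"), n, n)
    print(f"commit after every row: {per_row:.3f} s")
    print(f"single commit at end:   {batched:.3f} s")
```

The batched variant holds its transaction open for the whole loop, which is exactly the locking side of the tradeoff: other connections cannot write until the final commit.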

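The visibility rule discussed in this article (a change is seen by other connections only once it has been committed) can be checked with a small experiment. The sketch below is a reconstruction under assumptions, not the original script: the function name `visibility_demo`, the connection names `writer` and `reader`, and the file name are hypothetical, while the table name and the SQL statements follow the fragments quoted in the text. It counts up twice before committing, so the second connection lags by two in the meantime.

```python
import os
import sqlite3
import tempfile


def visibility_demo(db_path):
    """Return (own_view, other_before, other_after) for an uncommitted update.

    own_view     -- rows as seen by the connection that made the change
    other_before -- rows as seen by a second connection before the commit
    other_after  -- rows as seen by the second connection after the commit
    """
    writer = sqlite3.connect(db_path)
    reader = sqlite3.connect(db_path)  # stands in for a concurrent script
    try:
        writer.execute(
            'CREATE TABLE "mytable" '
            '("id" INTEGER PRIMARY KEY AUTOINCREMENT, "data" INTEGER)'
        )
        cur = writer.execute('INSERT INTO "mytable"(data) VALUES (17)')
        rowid = cur.lastrowid
        writer.commit()

        select = 'SELECT data FROM "mytable"'
        update = 'UPDATE "mytable" SET data = data + 1 WHERE "id" = ?'

        # Count up twice without committing.
        writer.execute(update, (rowid,))
        writer.execute(update, (rowid,))

        own_view = writer.execute(select).fetchall()
        other_before = reader.execute(select).fetchall()

        writer.commit()
        other_after = reader.execute(select).fetchall()
        return own_view, other_before, other_after
    finally:
        reader.close()
        writer.close()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        own, before, after = visibility_demo(os.path.join(tmp, "demo.sqlite"))
    print("same connection sees          ", own)
    print("other connection before commit", before)
    print("other connection after commit ", after)
```

With SQLite's default rollback journal, the writing connection sees 19 right away, while the second connection still reads 17 until the commit and 19 afterwards.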